Welcome back, Spectrum Visionaries.

AGI (artificial general intelligence) is the idea that AI could one day learn and perform any task a human can, not just the ones it was trained for. It is one of the most debated questions in the field right now, and today's analysis asks whether the tests used to track that progress are measuring the right kind of intelligence.

This issue also explores surgical robotics getting its first open dataset, touch as a new sensing layer for manipulation, and why hazardous waste sorting is getting an AI-guided system for the first time.

THE ANALYSIS

Artificial General Intelligence Through Better Testing

Source: arcprize.org

Artificial General Intelligence (AGI) progress is mostly reported through benchmark scores. Models get harder tests, scores go up, and the field moves on. But that only works if the tests are measuring the right thing.

The core argument from ARC, an organization focused on measuring and advancing AGI, is that intelligence is not the same as task skill, and that the field may still be optimizing for the wrong feedback signal.

The Benchmark Problem

Many benchmarks reward performance on prepared domains. That makes it easier for models to accumulate task-specific skills without demonstrating real adaptability. ARC argues that "harder" is not the same as "more general".

Skill can be purchased with more training data, more compute, stronger priors, and better scaffolding. A model that scores highly on a benchmark may be showing mastery of that test, not the ability to generalize to something new.

What Intelligence Actually Is

In 2019, researcher François Chollet proposed a different measure: “Intelligence is not what a system knows but how quickly it can learn something new it was never trained on.”

A system that memorizes the right answers is not the same as a system that can figure out the right answers in a novel situation. By that definition, AGI is not a smarter model but a system that learns the way humans do.

What a Better Test Looks Like

ARC Foundation built a benchmark series around that definition. Their most recent version, ARC-AGI-3, consists of hundreds of original environments and thousands of game-style levels.

Agents receive no instructions, no rules, and no stated goals. They have to explore, infer what matters, build a world model, and adapt over time.

Scoring is based on action efficiency relative to humans. Ordinary humans with no prior training scored 100%, while frontier models scored only 0.26%.
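To make "action efficiency relative to humans" concrete, here is a minimal sketch in Python. The formula is an assumption for illustration only, not ARC's published scoring rule: it treats a level's score as the human action count divided by the agent's action count, capped at 1, and gives 0 to unsolved levels. The function name and the sample numbers are hypothetical.

```python
from typing import Optional


def action_efficiency(human_actions: int, agent_actions: Optional[int]) -> float:
    """Hypothetical per-level score: human actions / agent actions, capped at 1.0.

    This is an illustrative assumption, not ARC's actual formula.
    agent_actions is None when the agent never solved the level.
    """
    if agent_actions is None:
        return 0.0  # unsolved levels earn nothing
    if human_actions <= 0 or agent_actions <= 0:
        raise ValueError("action counts must be positive")
    return min(1.0, human_actions / agent_actions)


# Made-up example levels: (human action count, agent action count or None)
levels = [(20, 20), (20, 80), (20, None)]

scores = [action_efficiency(h, a) for h, a in levels]
average = sum(scores) / len(scores)

print(scores)
print(f"Average efficiency: {average:.1%}")
```

Under a rule like this, an agent that eventually solves a level by brute-force exploration still scores poorly, which is the point of measuring efficiency rather than completion alone.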

What the Gap Reveals

ARC-AGI-1 exposed the limits of deep-learning-era pattern recognition, ARC-AGI-2 pushed reasoning models much harder, and ARC-AGI-3 shifts the test again, toward interactive adaptation in novel environments.

The frontier may be getting better at static reasoning, but AGI-like performance may depend on something harder: learning how a new environment works without being told.

Why It Matters: The models getting better at benchmarks and the models getting closer to human-like generalisation may not be the same thing. The gap that matters most right now is not the one between human and AI performance on any specific task, but the one in how quickly and accurately each can adapt to something new.

Timeline: ARC-AGI-3 launched on March 25, 2026. It is the first fully interactive benchmark in the ARC series, and as of launch, humans score 100%, and frontier AI scores 0.26%, meaning no system has yet demonstrated the learning efficiency ARC uses as its bar for AGI.

THE AI UPDATE

Surgical Robotics Is Getting Its First Open Training Dataset

Open-H, unveiled at GTC, is the world's largest open dataset for healthcare robotics, built from real surgical video, robotic telemetry, and procedure data contributed by CMR Surgical and partner institutions. It underpins Isaac GR00T-H, the first open vision-language-action model built specifically for surgical robotics.

Unlike warehouse and factory robotics, surgical AI has had no shared foundation to train from. Open-H changes that by making real operating room data available to the broader research community for the first time.

Timeline: GR00T-H is research-stage as of 2026, with clinical deployment of AI-assisted surgical systems targeting the late 2020s as real-world validation, workflow integration, and regulatory clearance develop.

AI Manipulation Is Shifting From Vision-Only to Touch-and-Vision

Sanctuary AI demonstrated a robotic hand that can reposition objects it has never encountered before, using touch alone to recover when a grasp goes wrong. The hand uses miniaturised hydraulic valves instead of electric motors, giving it the force sensitivity needed to detect and correct slip in real time, without resetting.

Most robotic manipulation relies on vision to plan and correct movement. This demonstration marks an early shift toward touch as the primary sensing layer for fine manipulation, the kind factories need but cameras alone cannot reliably deliver.

Timeline: Demonstrated in April 2026 at the simulation-to-real transfer stage. Industrial deployment through the Magna International partnership is targeting the late 2020s as dexterity generalises beyond structured object categories.

Hazardous Waste Is Getting Its First AI-Guided Sorting System

Source: gov.uk

The UK's Nuclear Decommissioning Authority launched Auto-SAS, a four-year program to sort and segregate radioactive waste autonomously at licensed nuclear sites, starting at Oldbury. The system uses vision AI to classify waste by radioactivity level and physical characteristics, routing lower-risk material away from more expensive disposal pathways.

Sorting radioactive waste has long depended on human handling in environments where mistakes are costly and exposure risk is real. Auto-SAS is an early attempt to move that judgment into a vision-guided system.

Timeline: Auto-SAS is targeting initial active deployment at Oldbury in 2027 to 2028 as AI classification models and radiation-hardened systems mature for licensed site conditions.

THE MARKET

Simulation Software Market to Reach $70.8B by 2033

Growth

The global simulation software market reached $26.58 billion in 2025 and is projected to reach $70.78 billion by 2033 at a 13.0% CAGR. Demand is expanding across automotive, healthcare, industrial systems, and AI-enabled devices as virtual testing replaces physical prototyping across development workflows.

Pricing

ABB's integration of NVIDIA Omniverse into its RobotStudio platform aims to reduce setup costs by 40% by eliminating physical prototypes through virtual validation. Simulation allows teams to generate rare and dangerous scenarios that cannot be safely or affordably staged in the real world.

Adoption

Automotive and manufacturing is the largest end-use segment, driven by virtual testing, safety validation, and the growing complexity of electric and autonomous vehicles. Industrial and healthcare use is also rising, while on-premise deployment remains important where security and data control matter most.
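For readers who want to check the growth figures quoted earlier in this section, the short Python sketch below recomputes the compound annual growth rate from the 2025 and 2033 market sizes. The variable and function names are our own; only the dollar figures and the 13.0% rate come from the text above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


start_2025 = 26.58   # market size in 2025, $ billions
end_2033 = 70.78     # projected market size in 2033, $ billions
years = 2033 - 2025  # eight compounding periods

implied = cagr(start_2025, end_2033, years)
print(f"Implied CAGR: {implied:.1%}")

# Cross-check: compound the 2025 figure forward at the quoted 13.0% rate
projected = start_2025 * (1 + 0.13) ** years
print(f"2033 value at 13.0% CAGR: ${projected:.2f}B")
```

The implied rate rounds to the quoted 13.0%, and compounding forward at exactly 13.0% lands within rounding distance of the $70.78 billion projection.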

Takeaway:

Physical AI is increasingly building on the broader simulation software layer. As each vertical trains and validates inside its own virtual environment, simulation is becoming one of the core infrastructure layers beneath the stack.

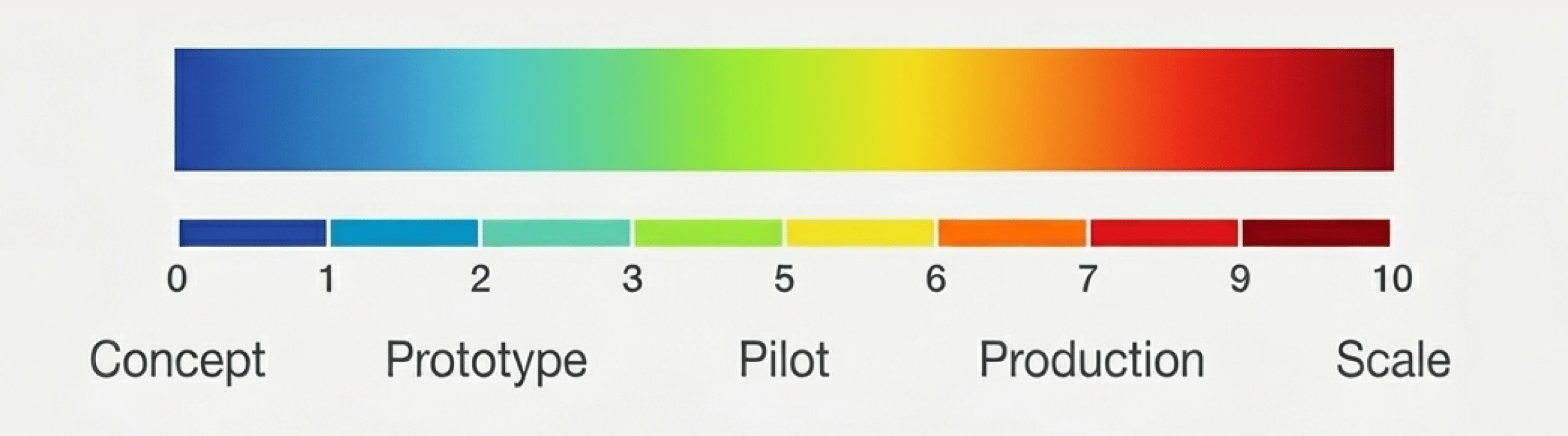

The Timeline Guide:

Each timeline and graph represents the realistic stage of the covered technology, plotted from concept to scale, capturing where it stands today and when broad deployment is likely.

What did you think of today’s issue?

Hit reply and tell us why; we actually read every note.

See you in the next upload.