Welcome back, Spectrum Visionaries.

NVIDIA has released a model that enables robots to understand vision, language, and action within a single system. Carmakers are bringing physical buttons back as safety regulators push against touchscreen-only controls. Meanwhile, Qualcomm and NEURA are building a universal “nervous system” for Physical AI, as a new generation of vision systems adds a fourth dimension.

This issue explores universal robot controllers, the return of physical controls in cars, and why 4D vision may reshape how machines understand the real world.

THE AI UPDATE

NVIDIA Releases Vision-Language-Action Model to Control Any Robot

The chip giant unveiled Isaac GR00T N1.6 at CES 2026, an open VLA model designed to operate as a universal controller for humanoid robots. The system translates camera feeds and voice commands into physical movements. By integrating GR00T with Cosmos Reason, NVIDIA is enabling robots to understand ambiguous commands like "be careful with that" and translate them into context-appropriate movements. The 2B-parameter model trains on over 10,000 hours of robot data, moving humanoids from task-specific programming to general-purpose operation across industries.

Timeline: Released open-source in January 2026. Franka Robotics, NEURA, and Boston Dynamics are integrating GR00T workflows, with broader factory and warehouse deployment targeting 2028-2030 as hardware costs and safety standards evolve.

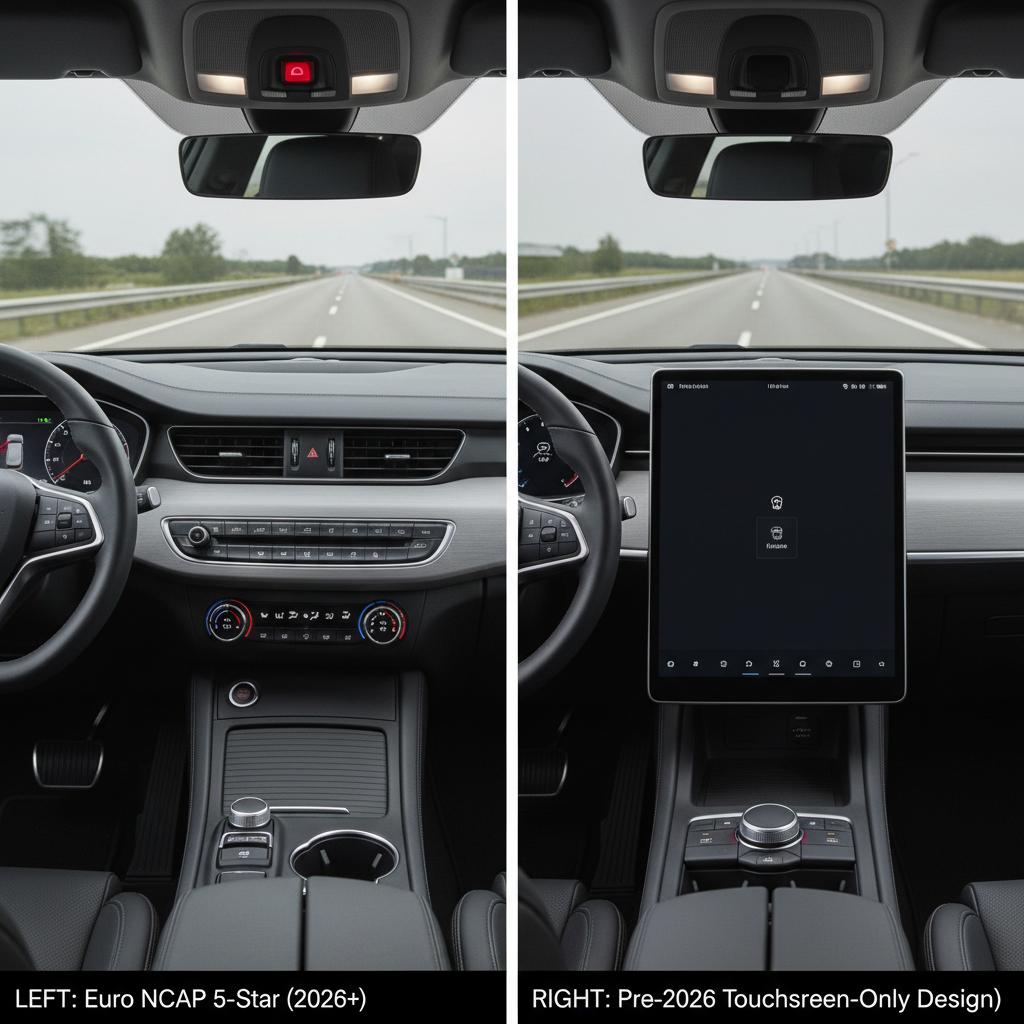

Physical Buttons Are Coming Back as Touchscreen Safety Data Emerges

Euro NCAP's 2026 protocols penalize touchscreen-only controls, challenging the industry's decade-long shift toward all-screen interiors. Swedish motoring tests showed that screen-based interactions can divert driver attention for 5 to 40 seconds.

The new five-star scoring deducts points from vehicles that lack physical controls for turn signals, wipers, the horn, and emergency calls. Every automaker that followed Tesla's Model S design philosophy, which eliminated physical buttons starting in 2012, now faces interior redesigns.

Timeline: Euro NCAP scoring began in January 2026, with Volkswagen and Hyundai restoring physical controls across their global lineups to protect their five-star ratings.

Qualcomm and NEURA Building a Universal "Nervous System" for Physical AI

The partnership is building the first universal "Nervous System" for Physical AI, a standardized blueprint that would let any AI system run the same software, regardless of hardware. The system pairs Qualcomm's Dragonwing IQ10 processors with NEURA's cognitive platforms, distributing AI processing between edge compute and sensor networks.

The design is intended to create an "app store" model for the physical world. Developers could write code once and deploy it across factory robots, surgical devices, or warehouse systems without rebuilding for each machine, similar to how iPhone apps work on any iPhone.

Timeline: Reference architectures sampling to developers in March 2026, targeting industry-standard adoption by 2028, enabling mass-market Physical AI systems across manufacturing and service industries through 2029-2030.

THE DEEP DIVE

What 4D Vision Means for Physical AI

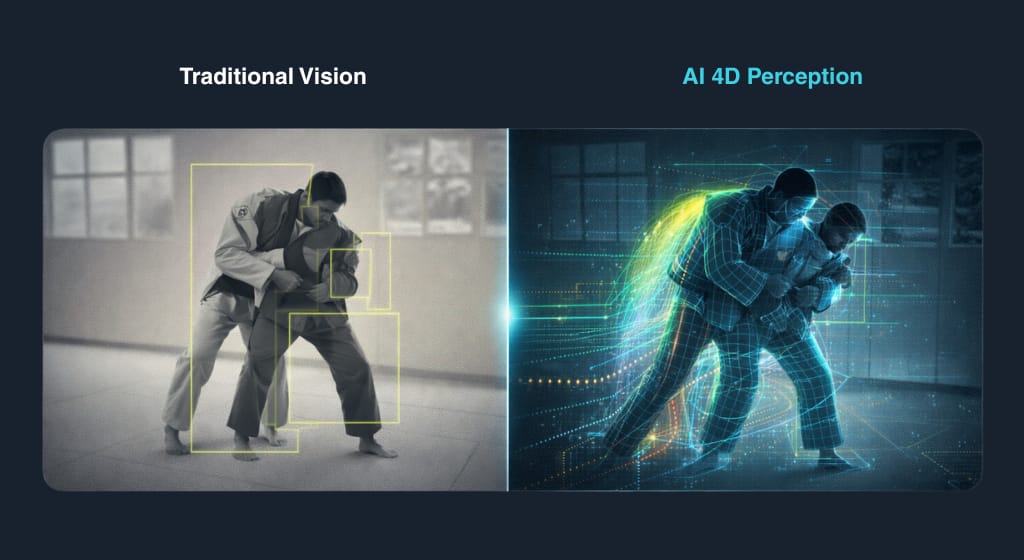

For decades, the Physical AI industry chased better motors, stronger grippers, and faster processors. The machines got better at moving, but never at understanding what they were moving or the context around it. 4D vision is changing that.

The 3D Limitation

Computer vision gave robots spatial understanding: height, width, depth. A robotic arm could identify a part in a bin, calculate the distance, and plan a path to grasp it. But 3D vision only captures a single frozen moment. If the part shifts while the robot moves, the system has to stop and recalculate.

This limitation drove the "controlled environment" requirements that defined early industrial automation. While advanced 3D systems with AI can now handle more variation, they still lack temporal awareness.

The Fourth Dimension

"4D vision" combines spatial data with temporal prediction. Instead of capturing a scene at one instant, these systems model how objects will behave over the next several seconds.

Apera AI's Vue system demonstrates this shift. When a package on a moving belt tilts mid-operation, the system predicts the new orientation, recalculates the approach angle, and adjusts the grip point while the arm is still in motion. The AI doesn't stop; it adapts.
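
To make "spatial data plus temporal prediction" concrete, here is a toy sketch in Python. It is not Apera AI's method or any vendor's API. It assumes the simplest possible motion model (constant velocity between two camera observations), and the names `Observation` and `predict_position` are hypothetical, invented for this illustration.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One 3D detection of an object, stamped with the time it was seen."""
    t: float  # seconds
    x: float  # meters
    y: float
    z: float


def predict_position(prev: Observation, curr: Observation, horizon_s: float):
    """Extrapolate where the object will be `horizon_s` seconds after `curr`.

    Assumption: the object keeps the velocity it showed between the two
    observations. Real systems use far richer learned motion models.
    """
    dt = curr.t - prev.t
    if dt <= 0:
        raise ValueError("observations must be in increasing time order")
    # Estimate velocity from the two snapshots (the "time" dimension).
    vx = (curr.x - prev.x) / dt
    vy = (curr.y - prev.y) / dt
    vz = (curr.z - prev.z) / dt
    # Project the latest position forward along that velocity.
    return (
        curr.x + vx * horizon_s,
        curr.y + vy * horizon_s,
        curr.z + vz * horizon_s,
    )


# A package moving along a belt: two snapshots taken 0.1 s apart.
first = Observation(t=0.0, x=0.00, y=0.0, z=0.5)
second = Observation(t=0.1, x=0.05, y=0.0, z=0.5)

# Aim the gripper at where the package will be in 0.2 s, not where it was.
target = predict_position(first, second, horizon_s=0.2)
```

A pure 3D system would aim at `second` and miss a moving target. The sketch aims at `target` instead, which is the difference the section describes, reduced to a few lines.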

Visual Intuition

Systems can learn physics by watching video rather than processing raw footage frame by frame. Meta's V-JEPA 2 was trained on over a million hours of video, then fine-tuned on just 62 hours of robot footage.

The result: a robot that picks and places objects it has never seen, in labs it has never trained in, at 65-80% success rates in controlled settings.

From Automated to Autonomous

The gap between automated and autonomous depends on many factors, such as safety systems, edge computing, and sensor fusion. 4D vision tackles a critical one: real-time adaptation to unpredictable environments.

This capability is opening the door to tasks that have resisted automation: surgical robots tracking live tissue, autonomous vehicles predicting pedestrian movement, and rescue robots navigating collapsed buildings.

Why It Matters: 4D gives Physical AI something called generalization, the ability to apply what it learned in one environment to another it's never seen. A robot that learns to handle a tilted box in a lab will be able to handle a shifting bag of flour in a grocery store, without any retraining.

THE MARKET

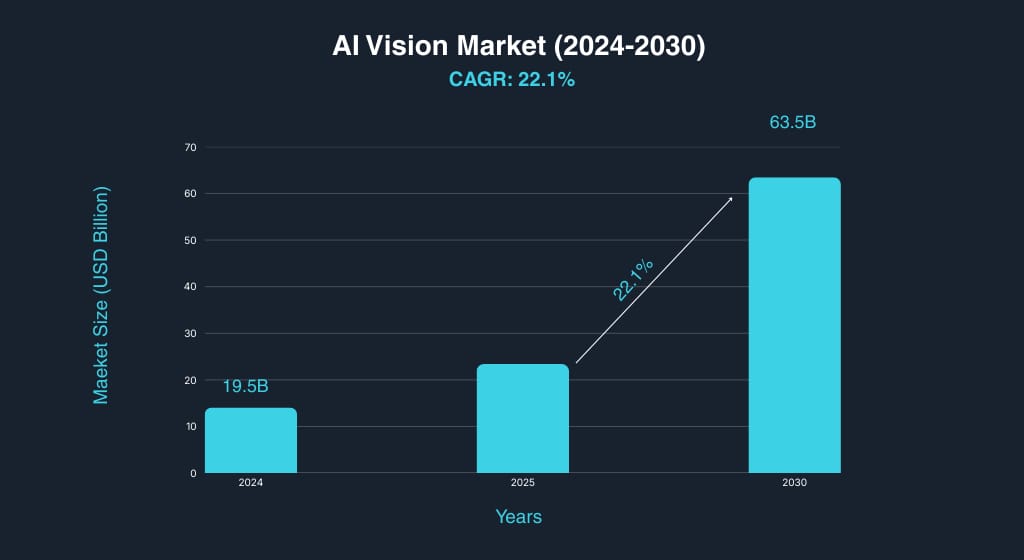

AI Vision Market to Reach $63B by 2030

Growth

The global AI computer vision market reached $19.5 billion in 2024 and is projected to reach $63.5 billion by 2030 at a 22.1% CAGR, driven by adoption across industrial automation, autonomous systems, and physical AI platforms.

Pricing

Industrial 2D vision systems typically range from $1,000 to $3,000, depending on resolution and processing capabilities, while advanced 3D systems used for robotic guidance can reach $10,000 to $30,000 per unit.

Adoption

Cognex has installed nearly one million vision systems globally, with automotive and electronics manufacturing driving the largest share of demand. The vision camera market alone is expanding from $4.1 billion in 2024 to $10.2 billion by 2030, as deployment expands into robotics, logistics, and physical AI platforms through the late 2020s.
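
For readers who want to sanity-check the growth figures above, here is a short Python sketch of the standard compound annual growth rate (CAGR) formula. The helper names `implied_cagr` and `project` are hypothetical, and the sketch assumes a six-year span from 2024 to 2030. The source reports may use a different base year, which would shift the result slightly.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years`."""
    return start_value * (1 + rate) ** years


YEARS = 6  # assumption: 2024 through 2030

# AI computer vision market, in billions of dollars.
vision_rate = implied_cagr(19.5, 63.5, YEARS)
vision_2030_at_quoted_rate = project(19.5, 0.221, YEARS)

# Vision camera market, in billions of dollars.
camera_rate = implied_cagr(4.1, 10.2, YEARS)

print(f"Implied vision CAGR: {vision_rate:.1%}")
print(f"2030 value at the quoted 22.1%: ${vision_2030_at_quoted_rate:.1f}B")
print(f"Implied camera CAGR: {camera_rate:.1%}")
```

Under the six-year assumption, the endpoints imply roughly 21.7% a year for the vision market, close to the quoted 22.1%, and about 16.4% a year for cameras. The small gap on the first figure is consistent with rounding or a different base year, though the source does not say which.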

Takeaway:

Market growth supports vision deployment across industrial automation, autonomous systems, and emerging physical AI platforms through the late 2020s as machines move from controlled factory settings into dynamic real-world environments.

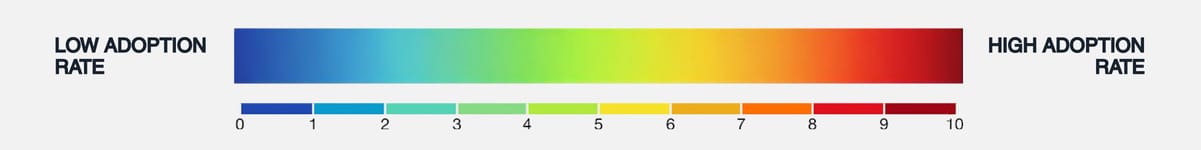

The Adoption Rate Guide:

Each timeline and graph represents the realistic adoption rate of the covered technology, plotted from low to high, capturing where it stands today and when broad deployment is likely.

What did you think of today’s issue?

Hit reply and tell us why; we actually read every note.

See you in the next upload.