Welcome back, Spectrum Visionaries.

NVIDIA has announced a new blueprint for automating how Physical AI systems are trained. NASA's Perseverance completed the first AI-planned drives on Mars. Meanwhile, researchers at UT Austin and Microsoft are adding touch to the robot sensing stack.

This issue explores why writing rules is no longer enough for Physical AI, and what teaching systems at scale actually looks like.

THE AI UPDATE

NVIDIA Unveils Data Factory Blueprint

At GTC 2026, NVIDIA announced the Physical AI Data Factory Blueprint, an open architecture designed to automate the generation, curation, and evaluation of training data for robotics, vision AI agents, and autonomous vehicles. The blueprint uses Cosmos world models to convert limited real-world footage into large synthetic datasets, including rare edge cases that would otherwise take months to collect. The release reflects an early industry shift in focus from models to data pipelines. FieldAI and Teradyne Robotics are among the first adopters.

Timeline: Released at GTC 2026, with early adopters integrating it across robotics and autonomous-vehicle pipelines. Broader deployment targeting 2028 to 2030 as synthetic data tooling matures.

NASA's Perseverance Completes the First AI-Planned Drives on Another Planet

NASA's six-wheeled Mars rover completed the first drives on another planet planned entirely by AI, with no human route planners involved. A vision-language model (VLM) studied the Martian terrain, identified hazards, and mapped out a safe path, the same job human engineers have done manually for nearly three decades.

The demonstration covered 456 meters across two sessions, led by JPL in collaboration with Anthropic. This approach could reduce operator workload for deep-space missions where communication delays make real-time human guidance nearly impossible.

Timeline: Demonstration completed December 2025. JPL is evaluating AI-planned navigation for future missions, with broader deep-space application targeting the late 2020s as reliability improves.

Robots Are Learning to Feel What They Handle

Researchers at UT Austin developed FORTE, a tactile sensing system that enables robotic fingers to detect force and slip in real time. In lab conditions, tested across 31 objects, including a raspberry, the system achieved a 92% grasp success rate and detected 93% of slip events with zero false alarms.

The fingers use 3D-printed air channels inspired by fish fin structures, keeping manufacturing costs low. Hardware and algorithms are publicly available, with early research applications in food processing and electronics assembly.

Timeline: Published in IEEE Robotics and Automation Letters, March 2026. Lab-stage as of 2026, with real-world deployment in structured industrial settings targeting 2028 to 2030 as durability and generalization improve.

Microsoft Is Teaching Robots a Sense of Touch

The software giant announced Rho-alpha, its first robotics model derived from the Phi vision-language series, combining vision, language, and tactile sensing in a single system. While most robotics models rely solely on vision, Rho-alpha adds real-time touch feedback, enabling robots to perform contact-heavy tasks.

The model is in research early access, currently evaluated on dual-arm setups. Rho-alpha marks a move toward physical AI systems that combine multiple sensing modalities rather than relying on vision alone.

Timeline: Announced January 2026, Research Early Access only. Broader evaluation targeting 2027 as Microsoft expands the training pipeline and tests across additional robot platforms.

THE DEEP DIVE

The End of Scripted Automation

At Davos 2026, Jensen Huang said, "You don't write AI. You teach AI." He was specifically talking about why building systems that operate in the real world requires a different approach than anything the software industry has used before.

The Long Tail Problem

Traditional automation was built for repeatable tasks in repeatable environments, but Physical AI gets neither. A vehicle merging onto a highway or a drone landing on a moving platform cannot be fully scripted.

The deeper problem is the long tail. Rare, ambiguous, and dangerous scenarios cannot be collected safely or cheaply in the real world, and no programmer can write rules for situations too hazardous to observe.

The Teaching Solution

Instead of writing instructions, engineers show the machine what to do. A human demonstrates a task, and the robot learns to replicate and generalize from it.

When that is not enough, the system turns to reinforcement learning, attempting the task in simulation thousands of times, adjusting after each failure, until it builds its own policy.
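To make the try, fail, adjust loop concrete, here is a minimal sketch using tabular Q-learning on a toy five-cell corridor. Everything in it is an assumption chosen for illustration: the corridor, the reward of 1 for reaching the last cell, and the hyperparameters. Production robotics stacks use neural-network policies and physics simulators, not a lookup table, so read this as a picture of the idea rather than anyone's actual training code.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal (toy assumption)
ACTIONS = (-1, +1)    # index 0 steps left, index 1 steps right


def train(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by repeated simulated attempts (tabular Q-learning)."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the length of each attempt
            # Explore at random some of the time, and whenever values are tied.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            # Walls clamp the move so the agent stays inside the corridor.
            nxt = min(max(state + ACTIONS[a], 0), N_STATES - 1)
            done = nxt == N_STATES - 1
            reward = 1.0 if done else 0.0
            # Adjust the estimate toward reward plus discounted future value.
            target = reward + (0.0 if done else gamma * max(q[nxt]))
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
            if done:
                break
    return q


def greedy_policy(q):
    """Best learned move for each non-goal cell: -1 is left, +1 is right."""
    return [ACTIONS[0 if row[0] > row[1] else 1] for row in q[:-1]]
```

Calling `greedy_policy(train())` returns `[1, 1, 1, 1]`: after enough simulated attempts, the learned values tell the agent to step right from every cell. Nobody wrote that rule; it emerged from the value updates.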

Teaching at Scale

Training one system, one task at a time, is not scalable. Boston Dynamics layered reinforcement learning into Spot when real-world conditions grew too variable to model in code. The policy is no longer written in source code. It lives in a neural network shaped by experience.

NVIDIA's Data Factory Blueprint, announced at GTC 2026, automates training data generation, curation, and evaluation at scale. FieldAI is using it for unstructured field environments, and Hexagon Robotics is using it for industrial automation.

Why It Matters: Physical AI will scale when companies get better at teaching systems to handle conditions that fall outside the ideal. The next winners may not be the ones with the best hardware. They will be the ones with the best teachers.

Timeline: Physical AI teaching infrastructure is in early industrial deployment as of 2026, with simulation-driven pipelines expanding across robotics and autonomous vehicles through 2028 to 2030 as demonstration data quality and world model fidelity improve.

THE MARKET

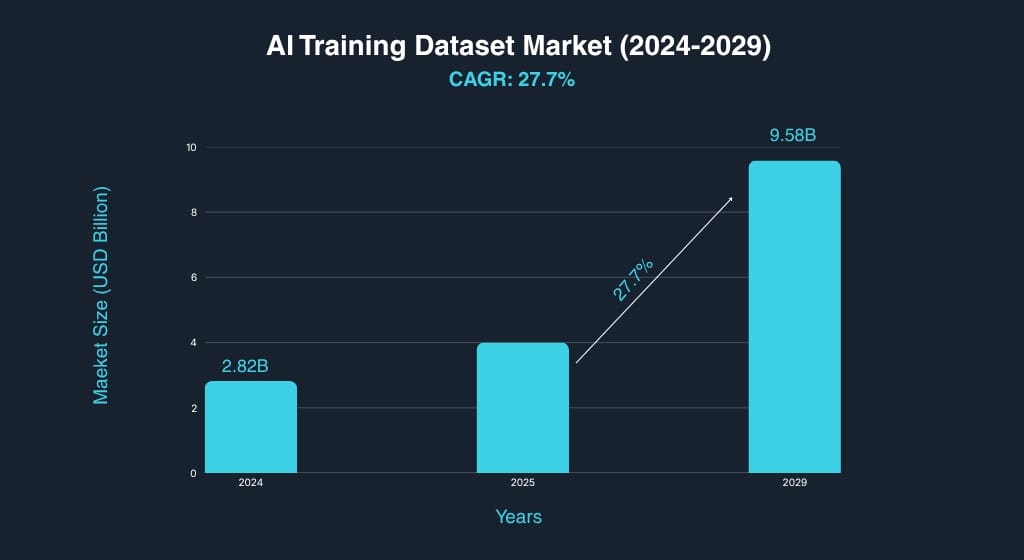

AI Training Dataset Market to Reach $9.6B by 2029

Growth

The global AI training dataset market reached $2.82 billion in 2024 and is projected to reach $9.58 billion by 2029 at a 27.7% CAGR. Synthetic data is emerging as a critical driver, enabling developers to generate rare-scenario data that is too dangerous or too expensive to collect in the real world.

Pricing

A single teleoperation setup for collecting real-world robot demonstrations can cost roughly $25,000 or more per platform. Synthetic data pipelines running on cloud infrastructure remove that hardware requirement, making large-scale training economically feasible for the first time.

Adoption

The global robotics market reached approximately $50 billion in 2025 and is projected to reach $111 billion by 2030 at a 14% CAGR. As deployment scales, simulation and synthetic data pipelines are becoming core components of the development stack.
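For readers who want to check the growth rates quoted in this section, here is a small sketch of the standard compound annual growth rate formula: (end / start) raised to the power 1 / years, minus 1. The function name and the choice to count years as the gap between the two calendar years are assumptions made for this example, not details taken from the underlying forecasts.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Dataset market: $2.82B in 2024 to $9.58B in 2029 (five years).
dataset_rate = cagr(2.82, 9.58, 2029 - 2024)
print(f"Dataset market implied CAGR: {dataset_rate:.1%}")

# Robotics market: about $50B in 2025 to $111B in 2030 (five years).
robotics_rate = cagr(50.0, 111.0, 2030 - 2025)
print(f"Robotics market implied CAGR: {robotics_rate:.1%}")
```

The dataset figures reproduce the quoted 27.7%. The robotics endpoints work out to roughly 17% over a 2025-to-2030 window, so the quoted 14% may rest on a different base year or on rounding in the source forecast.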

Takeaway:

As AI moves from model development to real-world deployment, the infrastructure for teaching systems at scale is becoming one of the most important layers in the stack.

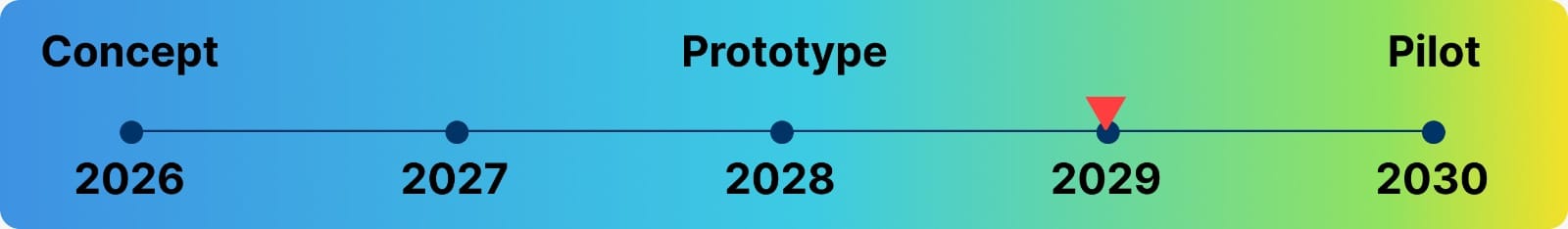

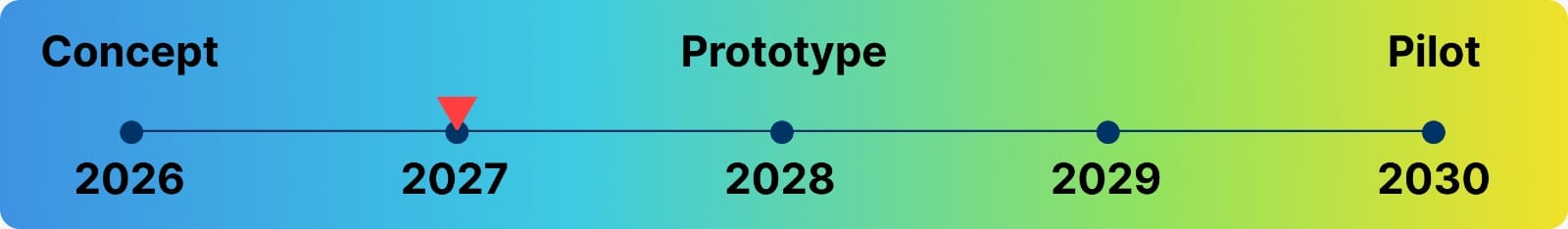

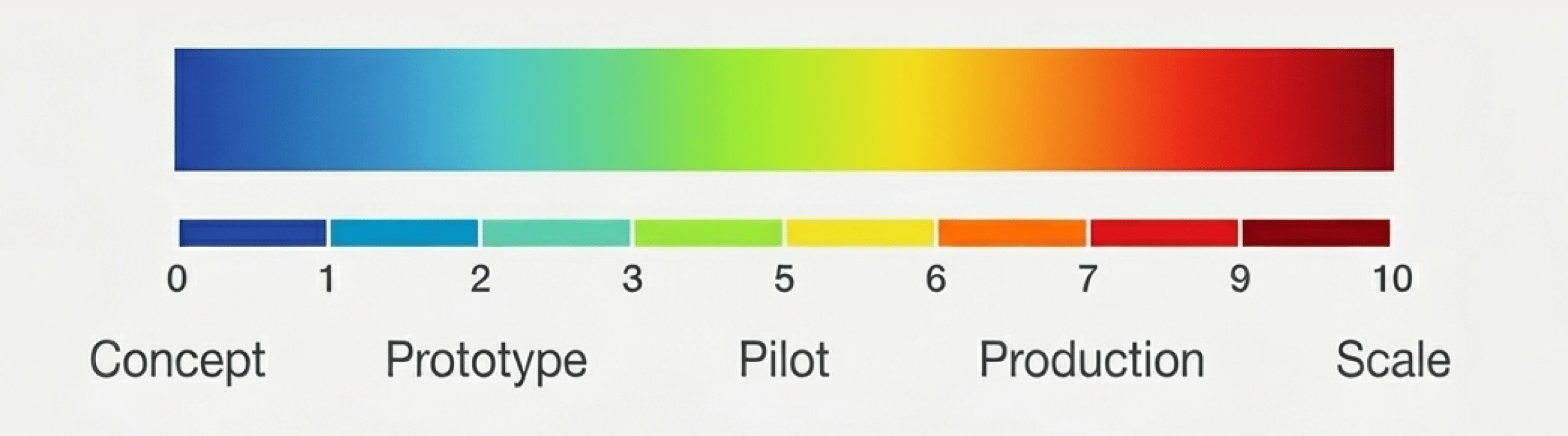

The Timeline Guide:

Each timeline and graph represents the realistic stage of the covered technology, plotted from concept to scale, capturing where it stands today and when broad deployment is likely.

What did you think of today’s issue?

Hit reply and tell us; we actually read every note.

See you in the next upload