Welcome back, Spectrum Visionaries.

Humanoid robots are tackling the uncanny valley by reading emotions, but "autonomous" systems still rely on humans more than companies admit. Meanwhile, OpenAI is taking personal AI agents mainstream by hiring OpenClaw's creator, and regulatory pressure is pushing thermal vision toward standard equipment as autonomous vehicles need round-the-clock object detection.

This issue explores how vision-enabled humanoids reduce the uncanny valley, the hidden “human in the loop” in AI autonomy, OpenAI's push into personal agents, and why your next car might have thermal vision.

THE AI UPDATE

Humanoid Robots Read Emotions to Solve "Uncanny Valley"

Realbotix demonstrated humanoids at CES 2026 that read human emotions through vision systems embedded in the robots' eyes. The system interprets facial cues and vocal tone, then adjusts its expressions to match the emotional context.

Responding with emotionally appropriate expressions reduces the "uncanny valley" effect and positions these humanoids for roles in customer service and healthcare. Tix4 is deploying multilingual agents at Las Vegas kiosks, with pilots running since mid-2025.

Timeline: Pilot deployments at kiosks began in mid-2025, followed by the CES 2026 demonstration, with expansion into hospitality through 2027 and healthcare settings targeted for the late 2020s as emotion recognition matures.

Waymo's 'Autonomous' Taxis Phone a Friend Halfway Across the World

Waymo's CSO admitted in a Senate hearing that its "autonomous" robotaxis use remote workers in the Philippines to guide vehicles through unusual situations. Senator Ed Markey called the practice "completely unacceptable," citing the lag from operators halfway around the world as a safety risk.

The disclosure highlights a broader "human-in-the-loop" pattern in AI systems marketed as autonomous: Tesla uses safety drivers, Presto uses remote workers for drive-thru orders, and Amazon needs human verification for Just Walk Out. AI often relies on human labor more than companies disclose.

Timeline: Remote assistance remains core to operations across six US markets through 2027, with reduced human intervention targeted for the late 2020s as vision systems learn from edge cases.

OpenAI Hires Founder of Viral OpenClaw to Build Personal Agents

Peter Steinberger, creator of the viral AI assistant OpenClaw (previously Clawdbot, then renamed Moltbot after Anthropic's legal pushback), has joined OpenAI to develop next-generation personal agents. The assistant gained a massive following by automating routine tasks such as scheduling, email management, and web research.

Steinberger walked away from building a standalone company, saying OpenAI was "the fastest way to bring this to everyone." OpenClaw remains open source, developed under a foundation backed by OpenAI.

Timeline: OpenClaw transitioning to foundation-led development in 2026, with OpenAI's personal agent platform targeting deployment through 2027-2028.

THE DEEP DIVE

Why Your Next Car May Have Thermal Vision

Autonomous vehicles can see cars and read signs, but they go blind at night when pedestrians are most vulnerable. Thermal imaging solves this by detecting body heat, enabling 24/7 detection.

The Detection Problem

RGB cameras rely on visible light, so at night pedestrians become hard to detect, especially beyond headlight range. LiDAR tries to solve this by mapping depth with laser pulses, but rain and fog scatter its beams, and it can't tell whether an object is alive or just metal. Both miss what matters most: identifying living beings.

The result: in the US, 77.7% of pedestrian fatalities occur at night even though only about 25% of driving happens after dark. Wildlife collisions cause over 200 deaths a year, mostly on dark rural highways.
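To see how lopsided those two numbers are, a quick back-of-the-envelope calculation helps. The sketch below assumes that "share of driving" is a fair stand-in for pedestrians' exposure to traffic, which the statistic itself doesn't guarantee. It then divides each period's share of deaths by its share of driving:

```python
def relative_night_risk(night_fatality_share: float, night_driving_share: float) -> float:
    """Ratio of nighttime to daytime pedestrian deaths per unit of driving.

    Assumption: share of driving is used as a proxy for exposure.
    """
    # Deaths per unit of exposure in each period = share of deaths / share of driving.
    night_rate = night_fatality_share / night_driving_share
    day_rate = (1 - night_fatality_share) / (1 - night_driving_share)
    return night_rate / day_rate


# Figures quoted above: 77.7% of pedestrian deaths at night, about 25% of driving after dark.
ratio = relative_night_risk(0.777, 0.25)
print(f"Per unit of driving, night is about {ratio:.1f}x deadlier for pedestrians")
```

Under that assumption, each hour on the road after dark carries roughly ten times the daytime pedestrian fatality rate.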

The Thermal Solution

The solution is to detect heat instead of light. Teledyne FLIR's Tura uses a 640×512 sensor to spot pedestrians and wildlife by measuring the temperature difference between skin and surroundings.
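In its simplest form, "temperature difference" detection fits in a few lines. The example below is a hypothetical illustration, not how Tura works. It assumes a frame already converted to per-pixel temperatures in °C and estimates the background as the median temperature. It then groups connected pixels that are at least 8 °C warmer, a threshold chosen arbitrarily for the sketch. Production systems typically run trained detectors on the thermal image instead.

```python
from collections import deque
from statistics import median

Frame = list[list[float]]  # per-pixel temperatures in °C (assumed input format)


def find_warm_blobs(frame: Frame, delta_c: float = 8.0, min_pixels: int = 3):
    """Return (row0, col0, row1, col1) boxes around regions warmer than the background."""
    rows, cols = len(frame), len(frame[0])
    background = median(t for row in frame for t in row)  # crude background estimate
    warm = [[t - background >= delta_c for t in row] for row in frame]
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if not warm[r][c] or seen[r][c]:
                continue
            # Flood-fill one 4-connected warm region, collecting its pixels.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and warm[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(pixels) >= min_pixels:  # skip isolated hot pixels (sensor noise)
                ys = [p[0] for p in pixels]
                xs = [p[1] for p in pixels]
                boxes.append((min(ys), min(xs), max(ys), max(xs)))
    return boxes


# Tiny synthetic 6x8 scene: 10 °C road, a 3x2 "pedestrian" at 33 °C, one hot pixel.
scene = [[10.0] * 8 for _ in range(6)]
for y in range(1, 4):
    for x in range(2, 4):
        scene[y][x] = 33.0
scene[5][7] = 40.0  # single hot pixel, dropped by min_pixels
print(find_warm_blobs(scene))  # [(1, 2, 3, 3)]
```

A real 640×512 frame has far more pixels and far subtler contrasts, which is why a fixed threshold is only a starting point.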

Unlike LiDAR, thermal doesn't need to illuminate anything. It passively senses the heat objects already give off, so it works in complete darkness and copes with rain and fog better than visible-light cameras.
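Why does passive heat sensing work? Every warm object emits infrared light, and Wien's displacement law gives the wavelength where that emission peaks: λ_peak = b / T, with b ≈ 2898 µm·K. The sketch below treats skin and road as ideal blackbodies, which is a simplification. Their emission peaks around 9 to 10 µm, inside the long-wave infrared band of roughly 8 to 14 µm that automotive thermal cameras generally use. Sunlight, by contrast, peaks near 0.5 µm in the visible range:

```python
WIEN_B_UM_K = 2897.77  # Wien's displacement constant, in micrometre-kelvins


def peak_wavelength_um(temp_c: float) -> float:
    """Wavelength (µm) at which an ideal blackbody at temp_c (°C) emits most strongly."""
    return WIEN_B_UM_K / (temp_c + 273.15)


for label, temp_c in [("skin", 33.0), ("cool road at night", 10.0), ("sun's surface", 5500.0)]:
    print(f"{label}: peak emission at about {peak_wavelength_um(temp_c):.1f} µm")
```

Because the camera reads the light a body emits rather than light reflected off it, it needs no headlights or laser pulses.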

Working as a Team

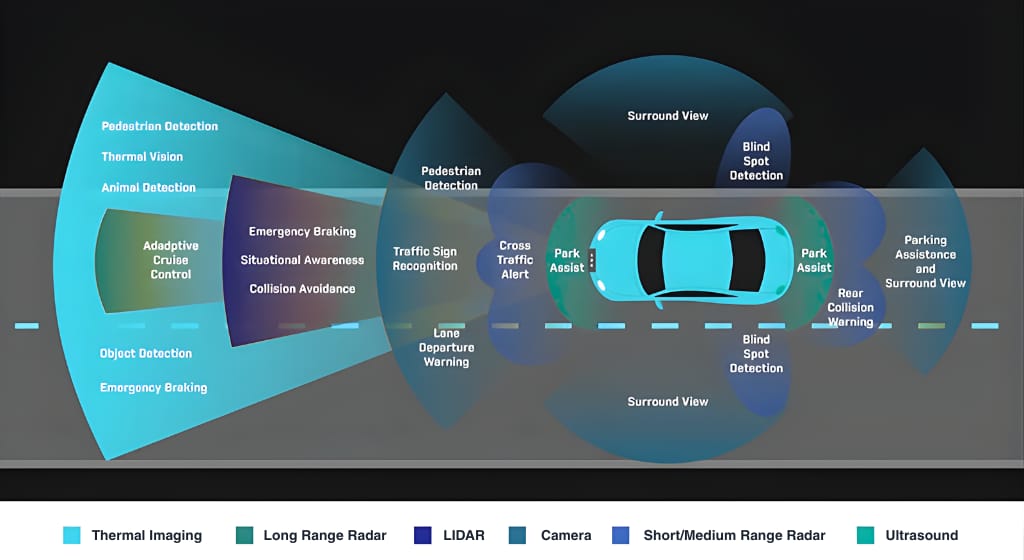

No single technology solves every problem, so autonomous vehicles don't rely on thermal alone; they combine multiple sensors.

Thermal detects people and animals but can't read traffic signals; standard radar measures distance but can't identify objects; RGB handles signs and markings but struggles in the dark.

Valeo's Northstar system combines them: thermal handles nighttime pedestrian detection for automatic braking, radar measures distance and velocity, and RGB reads traffic signals.
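One way to implement a split like Northstar's is late fusion: each sensor produces its own detections, and a decision layer combines them. The sketch below is hypothetical and not Valeo's implementation. It pairs a thermal detection with the radar track nearest in bearing and computes time to collision as range divided by closing speed. It brakes for a living target when that time drops below an assumed 1.5 s. The class names, the 2° bearing gate, and the threshold are all illustrative assumptions, and the RGB traffic-signal path is left out:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ThermalDetection:
    label: str          # e.g. "pedestrian" or "animal" (hypothetical classifier output)
    bearing_deg: float  # horizontal angle to the object, 0 = straight ahead


@dataclass
class RadarTrack:
    bearing_deg: float
    range_m: float
    closing_speed_mps: float  # positive when the gap is shrinking


def time_to_collision(det: ThermalDetection, tracks: list[RadarTrack],
                      max_bearing_gap_deg: float = 2.0) -> Optional[float]:
    """Match the thermal detection to the nearest radar track in bearing; return TTC in seconds."""
    candidates = [t for t in tracks if abs(t.bearing_deg - det.bearing_deg) <= max_bearing_gap_deg]
    if not candidates:
        return None  # radar sees nothing in that direction
    track = min(candidates, key=lambda t: abs(t.bearing_deg - det.bearing_deg))
    if track.closing_speed_mps <= 0:
        return None  # object is not getting closer
    return track.range_m / track.closing_speed_mps


def should_brake(det: ThermalDetection, tracks: list[RadarTrack],
                 ttc_threshold_s: float = 1.5) -> bool:
    """Brake only for living targets (from thermal) that radar says are about to be hit."""
    ttc = time_to_collision(det, tracks)
    return det.label in {"pedestrian", "animal"} and ttc is not None and ttc < ttc_threshold_s


tracks = [RadarTrack(bearing_deg=1.5, range_m=20.0, closing_speed_mps=15.0),
          RadarTrack(bearing_deg=-12.0, range_m=8.0, closing_speed_mps=3.0)]
walker = ThermalDetection(label="pedestrian", bearing_deg=1.0)
print(time_to_collision(walker, tracks))  # about 1.33 s
print(should_brake(walker, tracks))
```

Each sensor answers the question it is best at: thermal says *what* is there, radar says *how soon* the car reaches it.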

Why it matters: NHTSA's 2029 deadline for nighttime automatic emergency braking at highway speeds is pushing thermal from a premium option toward standard equipment, which would let vehicles detect pedestrians and wildlife regardless of lighting conditions.
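The phrase "at highway speeds" matters because a car's stopping distance grows with the square of its speed, so a sensor must spot a pedestrian from farther away. The sketch below adds reaction distance (speed × detection-to-braking delay) to braking distance (v² / 2a). The 0.5 s delay and 7 m/s² deceleration are assumptions for illustration, not values from NHTSA's test procedures:

```python
def required_detection_distance_m(speed_kmh: float, latency_s: float = 0.5,
                                  decel_mps2: float = 7.0) -> float:
    """Distance travelled from first detection until the vehicle stops.

    latency_s: assumed delay between detection and full braking.
    decel_mps2: assumed braking deceleration (about 0.7 g on dry pavement).
    """
    v = speed_kmh / 3.6  # convert km/h to m/s
    reaction_m = v * latency_s
    braking_m = v ** 2 / (2 * decel_mps2)
    return reaction_m + braking_m


for kmh in (50, 80, 100):
    print(f"{kmh} km/h: needs roughly {required_detection_distance_m(kmh):.0f} m of warning")
```

Under those assumptions, doubling speed from 50 to 100 km/h more than triples the warning distance needed (about 21 m versus 69 m).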

Timeline: Thermal cameras entering production through 2026-2027, expanding to mass-market vehicles by 2027-2028 as NHTSA mandates take effect, with full sensor fusion targeted for the late 2020s as costs decline.

THE MARKET

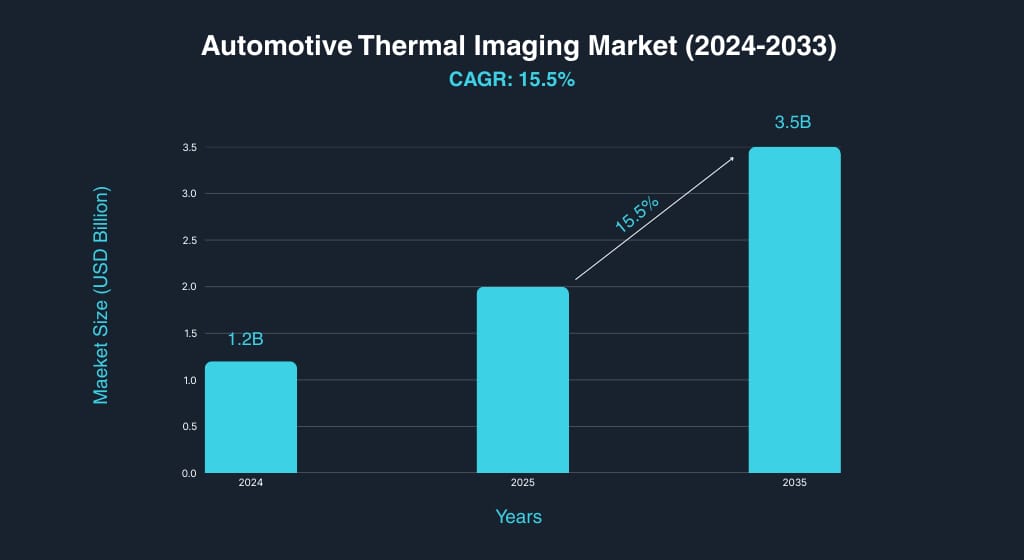

NHTSA Mandate Drives $3.5B Thermal Camera Market by 2033

Growth

The automotive thermal imaging market reached $1.2 billion in 2024 and is projected to hit $3.5 billion by 2033 at a 15.5% CAGR, driven by NHTSA's 2029 mandate for nighttime automatic emergency braking.

Pricing

Thermal camera manufacturers are targeting $110-$165 per unit for mass-market viability. Teledyne FLIR has manufactured more than 1 million automotive thermal cameras over 20 years, with its ASIL-B-compliant Tura module entering production in 2026.

Adoption

The global automotive camera market (including thermal, RGB, and other sensors) is expanding from $4.80 billion in 2024 to $11.8 billion by 2031. Thermal integration is starting in premium vehicles through 2026-2027, expanding to mass-market segments by 2028-2029 for NHTSA compliance.
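The standard CAGR formula, (end / start)^(1 / years) - 1, gives a quick sanity check on the growth figures above. Computed straight from the quoted endpoints, the thermal market implies about 12.6% a year over 2024-2033, below the quoted 15.5%. Market reports often measure CAGR over a different forecast window or base year, so treat the headline numbers as approximate:

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted above, in billions of USD.
print(f"Thermal imaging, 2024-2033: {implied_cagr(1.2, 3.5, 2033 - 2024):.1%} per year")
print(f"All automotive cameras, 2024-2031: {implied_cagr(4.80, 11.8, 2031 - 2024):.1%} per year")
print(f"$1.2B compounded at 15.5% for 9 years: ${project(1.2, 0.155, 9):.2f}B")
```

Either way, both the thermal segment and the wider camera market are growing at double-digit rates.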

Takeaway:

Expect thermal cameras to reach premium vehicles first (2026-2027) and mass-market segments by 2028-2029 as automakers prepare for NHTSA compliance, while full sensor fusion for Level 4 autonomy follows into the early 2030s.

What did you think of today’s issue?

Hit reply and tell us what worked and what didn't; we actually read every note.

See you in the next upload